Updated July 2018

There’s a right and wrong time to use any technology. The serverless platforms offered by AWS, Azure, and Google have many traits in common: massive scalability, simple deployments, and pay-per-use pricing. But while they are similar in those respects, each has its own particularities. This article focuses on when to use AWS Lambda with C# and .NET Core.

Let’s dig into the conditions and use cases where Lambda provides a tangible benefit, whether from a technical, business, or development perspective.

Low To Medium Memory Usage

Memory is a precious and expensive resource when it comes to Lambda. Lambda bills compute in “gigabyte-seconds” (the memory allocated to the function, in GB, multiplied by its execution time in seconds), which makes the exact cost of a function hard to reason about up front. What is clear is that a function with a high memory footprint is going to be pricey under very heavy load.

Because of that billing model, the compute cost of a 100ms execution doubles every time you double the memory allocated to the function (only the flat per-request fee stays the same). From a financial perspective, then, increasing memory as the needs of the Lambda grow does not scale well. To keep memory usage down, reference as few assemblies as possible, since every dependency increases the memory requirements of the Lambda. A good way to keep references to a minimum is to have every Lambda do only one thing. A Lambda with a single responsibility won’t need as many assemblies, or nearly as much memory, to get its work done.
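To make the doubling concrete, here is a back-of-the-envelope calculation of the compute portion of the bill. It is a sketch that assumes the 2018 list price of $0.00001667 per GB-second and billing rounded up to the nearest 100 ms; check the current AWS pricing page before relying on the numbers.

```python
import math

# Assumed 2018 list price for Lambda compute (check the current AWS pricing page).
PRICE_PER_GB_SECOND = 0.00001667  # USD per GB-second
BILLING_INCREMENT_MS = 100        # assumed: duration rounded up to the next 100 ms

def compute_cost(memory_mb: int, duration_ms: float) -> float:
    """Compute-only cost in USD of one invocation (excludes the flat per-request fee)."""
    # Round the duration up to the next billing increment.
    billed_ms = math.ceil(duration_ms / BILLING_INCREMENT_MS) * BILLING_INCREMENT_MS
    # GB-seconds = allocated memory in GB x billed duration in seconds.
    gb_seconds = (memory_mb / 1024) * (billed_ms / 1000)
    return gb_seconds * PRICE_PER_GB_SECOND

# Compute cost of one million 100 ms invocations at increasing memory sizes.
for memory_mb in (128, 256, 512, 1024):
    per_million = compute_cost(memory_mb, 100) * 1_000_000
    print(f"{memory_mb:>5} MB: ${per_million:.4f} per million 100 ms invocations")
```

Each doubling of memory doubles the compute figure exactly. The per-request fee (an assumed $0.20 per million invocations in 2018) is added on top regardless of memory size, which is why the total cost per call rises slightly less than twofold.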

Some common types of low-memory Lambdas are webhooks, event handlers, and CRUD APIs. Other types of APIs can also be built with .NET Lambdas, as long as you’re not too worried about the next criterion on the list.

Response Times Aren’t Critical

The languages supported by Lambda can be broken into two major categories: statically typed and dynamically typed. C#, being statically typed, shares Java’s drawbacks: slower startup times and a larger memory footprint than Node.js or Python need to accomplish the same task.

A Lambda function that is already warm in memory can handle another invocation almost instantly. But a function that isn’t already in memory has to go through a cold start, which incurs a significant amount of overhead. A cold start is like starting your car on a cold winter morning: the battery has to work a lot harder to turn the engine over. If the engine is already warm, the battery can get it going with much less current.

C# functions are among the costliest in terms of startup time, making them better suited to asynchronous background work than to low-latency APIs.

If you plan on using Lambda for a C# API and need lightning-fast response times, there are some steps you can take:

- Increasing the amount of memory your Lambda can use can drastically reduce cold start time, because AWS allocates CPU power in proportion to the memory you configure. Going back to the car battery analogy, it’s like starting a car at -30 °C with a 600-amp battery (it starts almost as it would in normal conditions) versus a 300-amp battery (you’ll have to crank the engine for a while before it catches).

- Create a second Lambda whose sole job is to ping the first one every few minutes so that it rarely incurs a cold start. However, cold starts will still occur if and when the Lambda function scales out to keep up with demand, since the new instances start cold.
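The warm-up ping is cheap. The following rough estimate of one month of pings is a sketch under stated assumptions: the 2018 list prices ($0.00001667 per GB-second, $0.20 per million requests), a 30-day month, each ping billed at the 100 ms minimum at the target function's memory size, and the pinger function's own invocations left out.

```python
# Rough monthly cost of pinging a function every few minutes to keep it warm.
# Assumptions: 2018 list prices, a 30-day month, and each ping billed at the
# 100 ms minimum at the target function's configured memory size.
PRICE_PER_GB_SECOND = 0.00001667      # USD per GB-second
PRICE_PER_REQUEST = 0.20 / 1_000_000  # USD per invocation
MINUTES_PER_MONTH = 30 * 24 * 60

def warmup_cost_per_month(interval_minutes: int, memory_mb: int):
    """Return (number of pings, USD cost) for one month of warm-up pings."""
    pings = MINUTES_PER_MONTH // interval_minutes
    gb_seconds = pings * (memory_mb / 1024) * 0.1  # each ping billed as 100 ms
    cost = gb_seconds * PRICE_PER_GB_SECOND + pings * PRICE_PER_REQUEST
    return pings, cost

pings, cost = warmup_cost_per_month(interval_minutes=5, memory_mb=1024)
print(f"{pings} pings per month, roughly ${cost:.4f}")
```

Even for a 1,024 MB function the total comes to a couple of cents a month, so the real limitation of this technique is the scale-out cold starts, not the cost.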

The bottom line: when response times are critical, a language with a low cold-start time (such as Node.js or Python) should be preferred over C#.

Variable Loads That Are Hard To Predict

Before serverless came around, you needed some kind of always-on, hourly-billed infrastructure to host APIs and background workers, regardless of how much traffic they actually received. That’s not cost-effective for an app that only gets a few hits an hour. Lambdas are great for these low-traffic situations because you only pay for what you use, which for an app called only a few times per hour is next to nothing (and could fall entirely within the free tier).
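To put “next to nothing” in numbers, here is a hedged estimate of the monthly bill for a low-traffic function. It assumes the 2018 list prices and the monthly free-tier allowance of 1 million requests and 400,000 GB-seconds; the traffic figures (10 calls per hour, 512 MB, 250 ms per call) are hypothetical.

```python
import math

# Assumed 2018 list prices and monthly free-tier allowances (check current pricing).
PRICE_PER_GB_SECOND = 0.00001667      # USD per GB-second
PRICE_PER_REQUEST = 0.20 / 1_000_000  # USD per invocation
FREE_REQUESTS = 1_000_000             # free invocations per month
FREE_GB_SECONDS = 400_000             # free GB-seconds per month

def monthly_cost(calls_per_hour: float, memory_mb: int, duration_ms: float,
                 apply_free_tier: bool = True) -> float:
    """Estimated monthly Lambda bill in USD for a steady trickle of calls."""
    calls = calls_per_hour * 24 * 30                     # 30-day month
    billed_seconds = math.ceil(duration_ms / 100) / 10   # rounded up to 100 ms
    gb_seconds = calls * (memory_mb / 1024) * billed_seconds
    if apply_free_tier:
        # Assumes this function gets the whole allowance; in practice the
        # free tier is shared by every function in the account.
        calls = max(0, calls - FREE_REQUESTS)
        gb_seconds = max(0.0, gb_seconds - FREE_GB_SECONDS)
    return gb_seconds * PRICE_PER_GB_SECOND + calls * PRICE_PER_REQUEST

# Hypothetical API: 10 calls per hour, 512 MB, 250 ms per call.
print(f"with free tier:    ${monthly_cost(10, 512, 250):.4f}")
print(f"without free tier: ${monthly_cost(10, 512, 250, apply_free_tier=False):.4f}")
```

Even without the free tier, this hypothetical API costs about two cents a month, whereas an always-on instance is billed for every hour whether or not it receives any traffic.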

An alternative to Lambda for a low-traffic API is to host it on a small instance such as a t1.micro. This can be problematic if the application suddenly sees an unexpected spike in traffic. While it is fairly easy to set up auto-scaling for instances, Lambda protects you from these scenarios by handling scaling automatically.

Willingness To Upgrade Frequently

.NET Core is evolving quickly, and AWS is keeping up with that pace. On July 9, 2018, AWS released support for the .NET Core 2.1 runtime and at the same time announced that creating new .NET Core 2.0 Lambdas will be deprecated at the beginning of January. Updating existing .NET Core 2.0 Lambdas will be deprecated three months after that. And while upgrading from 2.0 to 2.1 isn’t a huge deal, upgrading dozens, if not hundreds, of Lambdas still takes time that isn’t spent building business requirements.

It’s a safe bet that .NET Core 3 will be available on Lambda not long after its release, and you’ll have to upgrade again to take advantage of the improvements it brings. That’s a quick pace of change that needs to be accounted for when developing .NET Core Lambdas.

Heavily Invested In AWS

It’s possible to port Lambdas to another serverless platform without resorting to a complete rewrite, assuming the language is supported by both cloud providers. However, .NET Core/C# is only supported on AWS and Azure, so if cross-cloud compatibility is important to you, go with Node.js instead: it’s the only language supported across AWS, Azure, and Google.

Lambdas also tend to leverage other services, such as Step Functions, to coordinate workflows of multiple functions that act on databases, queues, and file storage. Since all these APIs are specific to AWS, moving a serverless tech stack to another cloud provider would be costly and offer no return on investment.

Building Proof of Concepts and Prototypes

It doesn’t take much time to write and deploy Lambdas. The quickstart examples and Visual Studio templates help beginners get going with Lambda in no time. Once the code is written, it can be deployed directly from Visual Studio, or the zipped build artifacts can be uploaded through the AWS Console. And since January 2018, it’s even possible to run an entire ASP.NET Core web application within a Lambda with very few changes to the project’s configuration.

That makes Lambda a great tool to quickly put together a proof of concept or prototype to validate business assumptions, ensure the technical feasibility of a project, and get it into users’ hands with as little delay as possible. If the project goes forward, it can be tweaked to run on Lambda more effectively, or moved to some other hosting technology as the requirements of the application change.

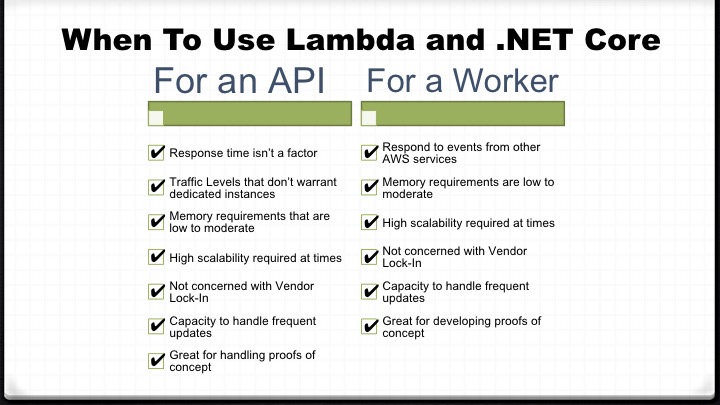

TL;DR

Here’s a cheat sheet that summarizes all the points discussed above:
